One common justification governments give for limiting freedom of speech is the need to prevent false information, often about the government itself. Thankfully, in the United States, the First Amendment prevents government from acting as the arbiter of truth, particularly in the context of political speech.

Recently, politicians have become concerned about potential widespread distribution of “deepfakes” of candidates and public officials. In the political context, the term “deepfakes” most commonly refers to seemingly realistic, but altered, visual or audio media appearing to show candidates doing or saying something that they did not in fact say or do. The impulse to address potential nefarious electoral use of such technology is understandable. But, as history has shown, punishing false or misleading political speech will inevitably suppress political speech generally and do more harm than good.

Despite widespread opposition from civil liberties advocates across the ideological spectrum, a few states have already enacted laws banning the distribution of “manipulated” media featuring candidates close to an election, and several proposals have been introduced at the federal level.[1] Before rushing to protect themselves from digitally altered media, lawmakers should consider the speech-chilling effects of overbroad regulation of edited content. Proposals aimed at regulating speech about government officials or candidates are likely to suppress political speech well beyond the intended target of intentionally deceptive content. Furthermore, such proposals are unlikely to pass constitutional muster.

1. Laws aimed at regulating or prohibiting deepfakes in the context of political speech are likely to run afoul of the First Amendment. The Supreme Court has consistently held that government may not punish speech simply because it is false. As the Court noted in a 2012 decision striking down as unconstitutional a federal law prohibiting false claims about military honors, permitting such laws “would endorse government authority to compile a list of subjects about which false statements are punishable. That governmental power has no clear limiting principle. Our constitutional tradition stands against the idea that we need Oceania’s Ministry of Truth.”[2] Yet politicians seeking to regulate political “deepfakes” would like to make an exception for expression on at least one subject: themselves. Determining whether particular content about candidates is “false” or “deceptive” is often highly subjective. This is why, as the Court stated in the same opinion, “The remedy for speech that is false is speech that is true. This is the ordinary course in a free society.”[3] The potential for abuse of laws punishing false speech in the context of political campaigns is significant. Political speech receives the highest level of First Amendment protection in part because it is the speech government officials are most tempted to suppress.

2. The standards and definitions for what constitutes a “deepfake” or “manipulated media” are inherently subjective and vague. Many proposals to ban political deepfakes rely on a “reasonable person” standard. That is, the law punishes edited content that would mislead a “reasonable person.” This is impossible to determine in any objective manner, so would-be speakers would have no way of knowing whether their speech crosses that line. Such a law would likely trigger a flood of complaints alleging that political ads violate it, many of them filed by candidates against their critics. Enforcement would be inconsistent, and speakers would be at constant risk. Entities that sell advertising space, such as TV and radio stations, would likely respond by rejecting more ads, and online platforms would act overzealously to take down perfectly legal content. Restrictions on political speech are bad enough, but when nobody knows exactly what those restrictions are, the harm to freedom of expression is multiplied.

3. Virtually all political communications are edited in some way. Regulating this practice stifles speech. Proposals to regulate “deepfakes” have vague definitions that appear to cover many forms of editing. Forcing speakers to guess what crosses the line into regulated “manipulated” media will chill political speech and suppress creative expression. With certain editing practices criminalized or subject to harsh civil penalties, self-serving charges of presenting audio “out of context” or adjusting the speed of recorded video will likely be frequent. Litigation meant only to suppress speech or punish one’s political opponents will flourish, to the detriment of civic discourse.

4. Prescribed disclaimers will tar a speaker’s message by encouraging listeners to discount it as “fake.” Requiring “manipulated media” to carry a disclaimer labeling it as such is itself often misleading. Many proposals would force such labels on satire and simple editing and, in effect, compel speakers to tell viewers and listeners not to believe anything in the message. Including the disclaimer will always be the safest option for any political ad with any editing, no matter how minimal. Therefore, these disclaimers will interfere with speech and, in many cases, prove to be outright misleading.

5. Legal remedies to potential harms from deepfakes exist through state defamation law. All 50 states and the District of Columbia have laws against defamation. If audio or visual content is manipulated in a way that defames, those harmed may sue and can win judgments to have the content removed and obtain monetary awards for damages from perpetrators. There’s no need for an additional law that gives government power to punish a specific form of defamation when a civil remedy exists.

Conclusion

The technology that enables the creation of certain types of “deepfakes” is relatively new, but manipulated media is not. Fake or doctored photographs have been around for about as long as photography itself, and, of course, photo editing technology has become more sophisticated, more widely available, easier to use, and harder to detect. Several proposals supposedly meant to address deepfakes would call into question the legality of photo, audio, and video editing practices that have existed for decades. Furthermore, the ability to publish falsehoods and intentional misquotes has existed for centuries.

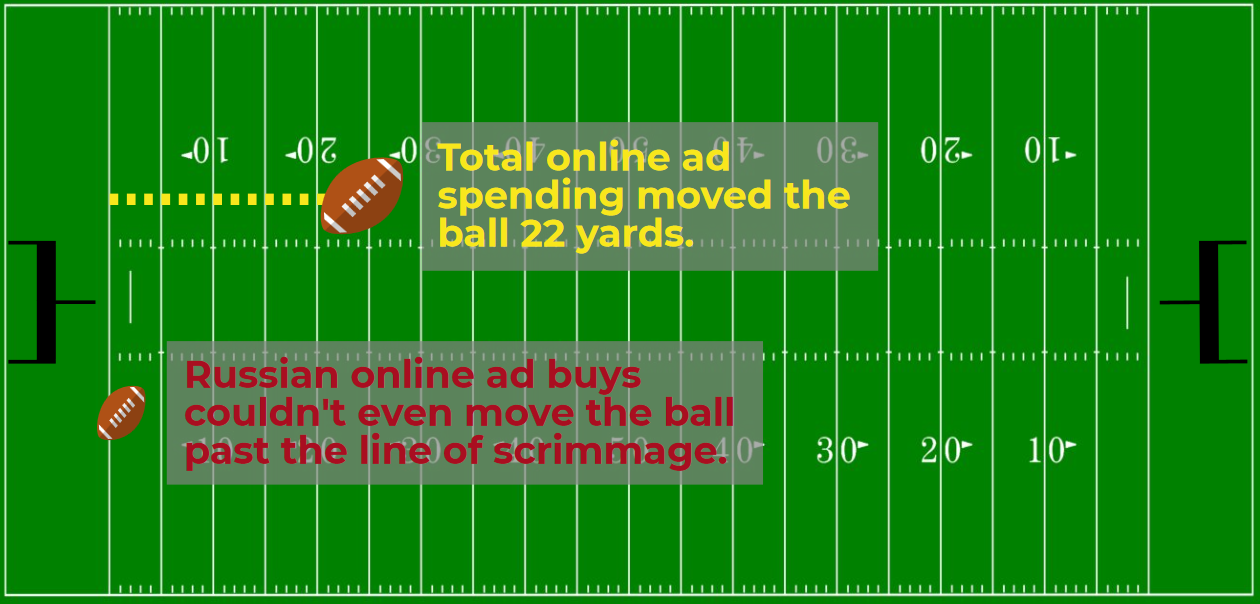

Before rushing to pass new laws, it’s important to consider to what extent deepfake technology truly presents new or unique challenges to democracy. As the Supreme Court has reiterated time and again, the answer to false and deceptive speech is counterspeech. Deepfakes have been around for years and have yet to even come close to impacting any election. Lawmakers must avoid the urge to sacrifice freedom of expression in an attempt to quash an unrealized problem. Government attempts to outlaw misleading political messages will do far more harm to democracy than the mere existence of such speech. Private institutions, the media, and individual citizens can expose falsehoods and decide the value of political expression themselves.

[1] See, e.g., Cal. Elec. Code § 20010; Defending Each and Every Person from False Appearances by Keeping Exploitation Subject to Accountability Act of 2019 (“DEEP FAKES Accountability Act”), H.R. 3230, 116th Cong. (1st Sess.) (2019); Korey Clark, “‘Deepfakes’ Emerging Issue in State Legislatures,” State Net Capitol Journal. Available at: https://www.lexisnexis.com/en-us/products/state-net/news/2021/06/04/Deepfakes-Emerging-Issue-in-State-Legislatures.page (June 4, 2021).

[2] United States v. Alvarez, 567 U.S. 709, 723 (2012) (referencing George Orwell’s Nineteen Eighty-Four).

[3] Id. at 727.