The Netflix documentary The Social Dilemma is making waves. Netflix describes the film as a “documentary-drama hybrid [that] explores the dangerous human impact of social networking, with tech experts sounding the alarm on their own creations.”

The main takeaway is that tech companies are stealthily controlling us by using sneaky product designs and thought-implanting algorithms. We, the hapless victims of Big Bad Tech, are the product. The goal of social media companies, the film claims, is to profit from modifying our behavior.

This thesis is riddled with problems. For one, it overstates its case. Presenting information – regardless of how persuasive or deceptive the presentation may be – isn’t mind control.

Early in the film, former Google employee Tristan Harris says, “two billion people will have thoughts that they didn’t intend to have because a designer at Google said this is how notifications work on that screen that you wake up to in the morning.” Harris claims Google has a moral responsibility to solve this “problem.”

But having thoughts you don’t intend to have is the essence of being alive and conscious. Unless you are a Buddhist monk with decades of expert meditation practice, your thoughts are regularly formed in response to external stimuli. When I’m watching a light-hearted television show and that commercial with sad dogs and Sarah McLachlan’s “Angel” comes on, I’m not thinking about hungry, desperate, disease-ridden animals because I independently thought it would be a fun interlude. I’m thinking about them because I just saw them on TV. That doesn’t make the A.S.P.C.A. some type of Svengali.

The talking heads of the film insist that what makes social media platforms so uniquely manipulative is their ability to “hack human psychology” and “hijack” the subconscious. They back up this central claim with such lackluster examples as Facebook’s photo tags, Gmail’s notifications, and YouTube’s video suggestions. Then, the tech insiders reveal the shocking truth to us plebes: these features are designed to drive user engagement.

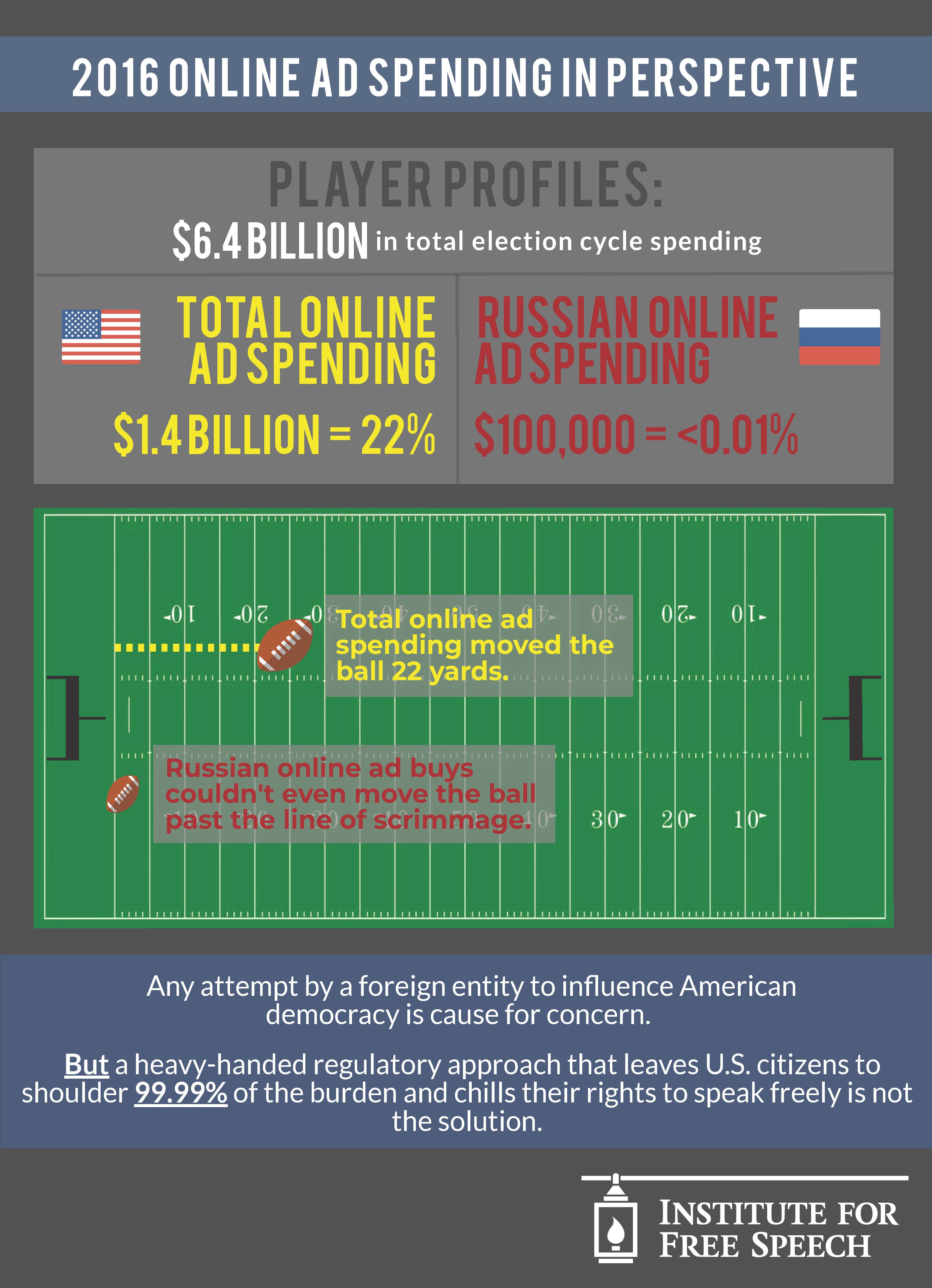

With all 2020 has to offer, it’s hard to believe that a company influencing consumers is considered a scandal. Companies have always used psychology to persuade current and potential users to do something in the company’s interest. A cleverly arranged grocery aisle, a sale price that isn’t really a discount, a celebrity endorsement, a beautifully photographed Big Mac, or a political ad that uses unflattering footage of a challenger all play on our psychology to push us towards buying a product or adopting an idea.

If anything, “addictive” product design at least offers an added benefit to the user. If Facebook’s photo-tagging feature makes the platform more enjoyable, does it really matter that the “real” motive is to increase engagement?

And if the real danger of these persuasive techniques is their secrecy, doesn’t the widespread release of the documentary itself – and the subsequent media coverage – resolve that issue? There is no shortage of commentary about how scary and abusive tech platforms are – especially Facebook. At least the adults among us are now empowered to make the right decisions for ourselves and our families, right?

Wrong. These platforms need government regulation, according to the film. But it’s not clear what policies the film’s experts are endorsing. There’s a suggestion of taxing data collection and talk of data privacy regulations, but other than that, regulation is only mentioned in generalities. With the looming threats to Section 230, it’s important to ask what exactly these former tech employees are proposing.

Online speech is crucial to free expression. With nothing more than an Internet connection, citizen journalists and amateur political pundits can speak truth to power. Online groups have been especially important during the pandemic, making it easier for Americans to exercise their freedom of association at a safe distance.

The film overdramatizes and oversimplifies a complex issue to create a caricature of evil tech companies that must be stopped at any cost. I could be wrong, but it seems like it might be priming us to accept some very bad policy in the near future.

For example, one person in the film contends that the “positive intermittent reinforcement” product designs of tech companies are like those in Las Vegas slot machines, creating zombie addicts and destroying lives. That argument isn’t new; it reminds me of the “violent video game” moral panic of the ’90s. There will always be activists who sensationalize fears to limit expression and change policy. We can’t let the latest scare tactics get in the way of sound judgment. (Interestingly, Mike Masnick of Techdirt recently wrote about the documentary’s hypocrisy in using the same emotionally manipulative tactics that it criticizes social media platforms for employing.)

I wish I could say the rest of the documentary was better, but it only gets more hysterical from there. The film blames platforms for the spread of misinformation, echo chambers, and political polarization, and argues that these scourges lead to real offline harm like political violence, an oncoming civil war, and, if that weren’t enough, the soon-to-be destruction of democracy. One interviewee says that we now “have less control over who we are and what we believe.”

In making these criticisms, the film strips individuals of their agency and casts the blame for nearly all of society’s ills on tech platforms instead of seeing them as mirrors to society. Much to my chagrin, Jacobin Magazine sums it up nicely:

“The Social Dilemma is a slick horror story with an improbable redemptive ending. It lacks the scruple or subtlety to ask how much of the horror emanates from society, rather than the machine.”